Muhammad Bilal

Robotics & AI Researcher

About

I am a graduate student at the University of Melbourne, Australia, with a research focus on robotics and human-centered intelligent systems. My work explores how robots can learn effectively from human input and interact with novices in intuitive and meaningful ways.

Previously, I served as a Research Assistant and later as Team Lead at the Human-Centered Robotics Lab, National Center of Robotics and Automation (NCRA), Pakistan.

Education

University of Engineering & Technology, Lahore, Pakistan.

News

Mar 16, 2026 — Our paper has been accepted for publication at HRI 2026 (Click here for full paper).

Feb 02, 2026 — Successfully completed PhD Final Seminar.

Dec 18, 2025 — Delivered an invited talk at Monash University (thanks to Dr. Michael for the invitation).

Dec 02, 2025 — Our paper has been accepted for publication at HRI 2026.

Nov 21, 2025 — Presented a robot demonstration at the 70th CIS School Anniversary.

Apr 20, 2025 — Successfully completed the second-year PhD progress review with satisfactory outcome.

Mar 13, 2025 — Attended the HRI 2025 Conference in Melbourne.

Jun 30, 2024 — Our paper has been accepted for publication at IROS 2024 (Click here for full paper).

Apr 20, 2024 — Passed PhD Confirmation.

Selected Publications

Conference Proceedings:

- Muhammad Bilal, Tharaka Ratnayake, D. Antony Chacon, Nir Lipovetzky, Denny Oetomo, Wafa Johal, “Design and Evaluation of AR-Based Real-Time Feedback System for Kinesthetic Robot Teaching,” ACM Designing Interactive Systems (DIS), 2026. [In Review]

- Muhammad Bilal, D. Antony Chacon, Nir Lipovetzky, Denny Oetomo, Wafa Johal, “Investigating the Impact of Robot Degree of Redundancy on Learning from Demonstration,” In 2026 21st ACM/IEEE International Conference on Human-Robot Interaction (HRI), 2026, doi: 10.1145/3757279.3785606. [In Press]

- Muhammad Bilal, Nir Lipovetzky, Denny Oetomo and Wafa Johal, “Beyond Success: Quantifying Demonstration Quality in Learning from Demonstration,” In 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2024, pp. 5120-5127, doi: 10.1109/IROS58592.2024.10802187.

- Syed Ali Huzaifa, Abdurrehman Akhtar, Meeran Ali Khan, and Muhammad Bilal, “Detection of Parkinson’s Tremor in Real Time Using Accelerometers,” In IEEE 7th International Conference on Smart Instrumentation, Measurement and Applications (ICSIMA), 2021, pp. 5-9, doi: 10.1109/ICSIMA50015.2021.9526327.

- Muhammad Bilal, Ali Raza, Mohsin Rizwan et al. “Towards Rehabilitation of Mughal Era Historical Places using 7 DOF Robotic Manipulator,” In 2019 International Conference on Robotics and Automation in Industry (ICRAI), 2019, pp. 1-6, doi: 10.1109/ICRAI47710.2019.8967360.

Journal Articles:

- Muhammad Bilal, Mohsin Rizwan et al. “Design Optimization of Powered Ankle Prosthesis to Reduce Peak Power Requirement,” Science Progress, Vol. 105(3), pp. 1-16, 2022, doi: 10.1177/00368504221117895.

- Muhammad Bilal, M. Nadeem Akram, and Mohsin Rizwan, “Adaptive Variable Impedance Control for Multi-axis Force Tracking in Uncertain Environment Stiffness with Redundancy Exploitation,” Journal of Control Engineering and Applied Informatics, Vol. 24(2), pp. 35-45, 2022.

Research Projects

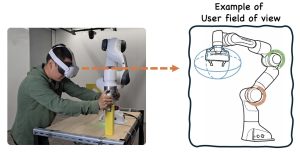

Feedback System for Kinesthetic Robot Teaching

Robots have the potential to assist humans not only in industrial tasks but also in everyday life. However, programming robots typically requires advanced technical expertise, which limits access for novice users. Learning from demonstration addresses this challenge by allowing novice users to teach robots new skills through physical demonstrations rather than by writing sophisticated code, thereby promoting the democratization of robotics. Prior research has shown that novice users often provide suboptimal demonstrations, which can reduce the robot’s ability to learn and execute the desired task effectively. To address this issue, we aim to design an augmented-reality-based real-time feedback system that supports users during demonstrations, enabling effective communication between the human demonstrator (teacher) and the robotic system (learner).

System Design and Evaluation

The first step is to identify potential ways to visualise the desired information content, aiming to reduce cognitive load while maximising teaching efficiency during demonstrations. Based on insights from a focus group study (N=9), we designed the feedback system using the Unity engine and then conducted a between-subject user study (N=36) to evaluate it.

We found that the proposed real-time feedback system improves multiple aspects of human performance, including mental workload, demonstration completion time, and task completion rate, compared to the conventional offline feedback system, which only shows what the robot has learned after several demonstrations rather than assisting users during the demonstration process itself.

This paper has been submitted to the ACM Designing Interactive Systems (DIS) 2026 conference and is currently under review.

Quality-Aware Robot Learning from Failed and Suboptimal Demonstrations

Learning from Demonstration is a common approach that enables novice users to teach robots new skills through demonstrations rather than by writing complex code. However, novice users often provide suboptimal demonstrations or even failed attempts, which can reduce the robot’s ability to effectively learn and execute tasks. Prior research has explored learning from such demonstrations with a focus solely on achieving robot task completion (i.e., a binary notion of robot success or failure using the learned model); however, these approaches often overlook the quality of task execution (i.e., how well the robot performs the task).

Method and Evaluation

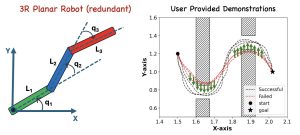

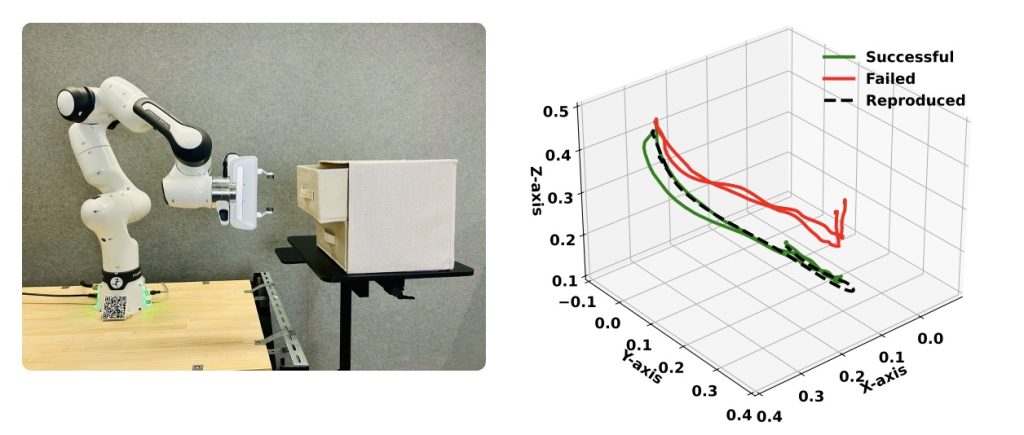

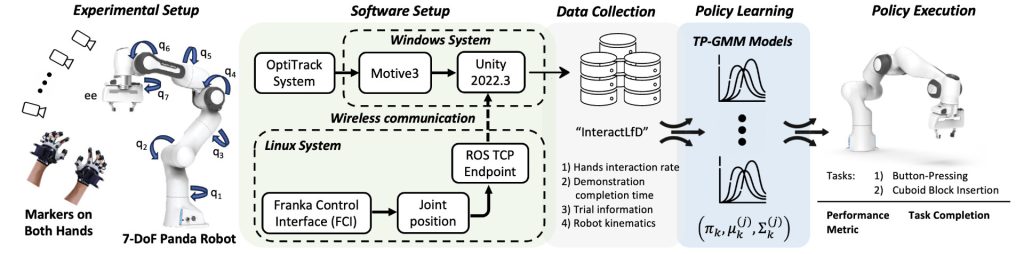

In this work, we present a novel quality-aware LfD framework that leverages a revised weighted Gaussian Mixture Model (GMM) to learn from both failed and suboptimal demonstrations. The proposed algorithm adjusts the GMM components by repelling them away from failed demonstrations and attracting them toward successful demonstrations.

The proposed framework not only ensures task completion but also guides the system toward high-quality task execution. We validated our approach through extensive 2D simulations with a 3R planar redundant robot and then 3D real-world experiments using a Franka 7-DoF Panda robot.
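The attract/repel idea described above can be illustrated with a toy sketch. This is not the framework's actual algorithm: it assumes isotropic components with a fixed width and updates only the component means, and the names `attract_repel_step`, `step`, and `repel` are hypothetical choices made for this illustration.

```python
import math

def responsibilities(point, means, sigma=1.0):
    """Soft assignment of a 2D point to isotropic Gaussian components."""
    scores = [
        math.exp(-((point[0] - m[0]) ** 2 + (point[1] - m[1]) ** 2) / (2 * sigma ** 2))
        for m in means
    ]
    total = sum(scores) or 1.0  # guard against numerical underflow
    return [s / total for s in scores]

def attract_repel_step(means, successes, failures, step=0.1, repel=0.5, sigma=1.0):
    """One toy update: pull means toward successful points, push them away from failed ones.

    Hypothetical illustration only; covariances and mixing weights are left untouched.
    """
    shifts = [[0.0, 0.0] for _ in means]
    # Successful points carry weight +1, failed points carry weight -repel.
    for points, weight in ((successes, 1.0), (failures, -repel)):
        for p in points:
            resp = responsibilities(p, means, sigma)
            for k, m in enumerate(means):
                shifts[k][0] += weight * resp[k] * (p[0] - m[0])
                shifts[k][1] += weight * resp[k] * (p[1] - m[1])
    n = max(len(successes) + len(failures), 1)
    return [
        (m[0] + step * s[0] / n, m[1] + step * s[1] / n)
        for m, s in zip(means, shifts)
    ]

if __name__ == "__main__":
    means = [(0.0, 0.0)]
    new_means = attract_repel_step(means, successes=[(1.0, 0.0)], failures=[(-1.0, 0.0)])
    print(new_means)  # the mean shifts toward the success and away from the failure
```

With one success on the right and one failure on the left of a component, both terms move the mean in the same direction, which is the qualitative behaviour the text describes.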

This paper is in preparation for submission to IEEE RAL.

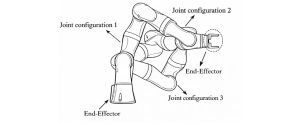

Impact of Robot Degree of Redundancy on Learning from Demonstration

Abstract: Learning from Demonstration allows robots to acquire skills from human demonstrations, making them more accessible to a wider range of users. Among different approaches, kinesthetic teaching allows humans to manipulate the robot joints directly, making it an effective method for demonstrating constrained tasks. However, robots with kinematic redundancy enable multiple joint configurations to achieve a desired task, which could influence human teaching performance. On one hand, it could make teaching easier, allowing more freedom to demonstrate the task, but on the other, it also increases the number of joints that need to be manipulated, potentially affecting the cognitive and physical load of the demonstrator. Therefore, it is crucial to investigate how the number of degrees of redundancy (DoR) impacts human performance during kinesthetic demonstrations, and then how these demonstrations influence robot performance. We simulated high and low DoR by locking one of the robot’s joints on a 7-DoF Panda robotic arm. We conducted a within-subject user study (N = 24) with two conditions: unconstrained condition (high DoR) and constrained condition (low DoR). We used a motion capture system to capture participants’ physical interaction with the robot when demonstrating two tasks: button pressing and cuboid block insertion. The results show that the robot’s DoR significantly affects mental workload, demonstration time, number of failed attempts, and physical interaction with the robot. Likewise, joint constraints significantly influence robot performance, measured by task completion using the learned model. These findings highlight the importance of considering robot DoR when demonstrating constrained tasks, allowing novice users to provide effective demonstrations.
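To make the notion of kinematic redundancy concrete, the sketch below uses a planar three-joint arm rather than the 7-DoF arm from the study. It shows two different joint configurations that place the end-effector at the same point. The link lengths, the target, and the function names are assumptions made for this illustration only.

```python
import math

LINKS = (1.0, 1.0, 0.5)  # hypothetical link lengths in metres

def forward_kinematics(q, links=LINKS):
    """End-effector (x, y) of a planar serial arm with relative joint angles q."""
    x = y = angle = 0.0
    for theta, length in zip(q, links):
        angle += theta
        x += length * math.cos(angle)
        y += length * math.sin(angle)
    return x, y

def ik_with_free_orientation(target, phi, links=LINKS):
    """Joint angles that reach `target` with the last link at absolute angle `phi`.

    For a position-only task, `phi` is the free parameter that the extra joint provides.
    Returns one of the two elbow solutions.
    """
    l1, l2, l3 = links
    # Wrist position once the last link's direction is fixed.
    wx = target[0] - l3 * math.cos(phi)
    wy = target[1] - l3 * math.sin(phi)
    c2 = (wx * wx + wy * wy - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if abs(c2) > 1.0:
        raise ValueError("wrist point is out of reach for this phi")
    q2 = math.acos(c2)
    q1 = math.atan2(wy, wx) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    q3 = phi - q1 - q2
    return (q1, q2, q3)

def degrees_of_redundancy(n_joints, task_dim):
    """Joints available beyond what the task strictly needs."""
    return n_joints - task_dim

if __name__ == "__main__":
    target = (1.2, 0.8)
    for phi in (0.0, math.pi / 2):
        q = ik_with_free_orientation(target, phi)
        print([round(a, 3) for a in q], forward_kinematics(q))
    print(degrees_of_redundancy(n_joints=3, task_dim=2))
```

Fixing `phi` in this toy model plays a role loosely similar to locking a joint: it removes the free parameter and leaves a single configuration per elbow branch.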

Study Overview

User Experiment Videos

Button Pressing Task

Cuboid Block Insertion Task

Key Takeaways

Providing novice users with higher robot redundancy (more DoF than strictly required) helps them identify suitable joint configurations within a larger feasible solution space.

The sequence of hand contact points contains valuable information that can be used to anticipate and prevent potential failures.

Citation

Muhammad Bilal, D. Antony Chacon, Nir Lipovetzky, Denny Oetomo, Wafa Johal, “Investigating the Impact of Robot Degree of Redundancy on Learning from Demonstration,” In 2026 21st ACM/IEEE International Conference on Human-Robot Interaction (HRI), 2026, doi: 10.1145/3757279.3785606.

[In Press]

Articles

Introduction to Robot Learning from Demonstration

Robots are no longer limited to factory floors. They are increasingly used in healthcare, homes, and everyday environments to assist people with a wide range of tasks. However, programming robots is still difficult. Most robotic systems require expert knowledge and complex coding, which makes them hard to use for non-experts. This creates a major barrier for wider adoption.

To overcome this challenge, researchers developed a method called Learning from Demonstration (LfD). Instead of writing code, users teach robots new skills by showing them how to perform a task. This makes robot programming more intuitive and helps make robotics accessible to a broader range of users.

Limitations of Traditional Approaches

Traditional robot programming requires users to carefully define every action step using code. This process is time-consuming and requires specialized expertise.

Motion planning methods reduce the need to specify exact trajectories, but they still require precise instructions such as goal positions and waypoints. These programs are often rigid and need to be rewritten when the environment changes.

Reinforcement learning offers more flexibility, but it introduces its own challenges. Designing a suitable reward function usually requires deep domain knowledge, and training often takes a long time. This makes reinforcement learning difficult to apply in real-world settings.

Because of these limitations, Learning from Demonstration becomes especially attractive, particularly when a task is hard to describe using rules or rewards, or when manual programming is impractical.

Ways Humans Can Demonstrate Tasks

There are several ways users can demonstrate tasks to a robot, depending on how they interact with the system.

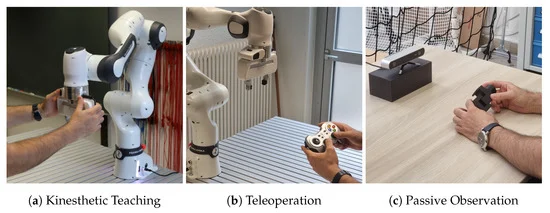

Kinesthetic Teaching

Kinesthetic teaching allows users to physically guide the robot by moving its joints directly. This method does not require extra sensors or equipment, making it simple and intuitive.

It is particularly well suited for robotic manipulators such as the KUKA iiwa (7-DoF) and Franka Emika (7-DoF) robots, where users can easily demonstrate precise motions by hand.

Teleoperation

In teleoperation, users control the robot using a joystick or remote controller. Typically, the user controls only the robot’s end-effector, while the robot computes the joint movements using inverse kinematics.

This approach is useful for robots with many joints, such as humanoid or highly redundant robots, where controlling each joint directly would be overwhelming. However, computing inverse kinematics for high-degree-of-freedom robots is mathematically challenging.
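As a rough illustration of how a small end-effector command can be turned into joint motion, the sketch below applies damped least-squares inverse kinematics to a planar two-link arm. This is one common numerical technique, not necessarily what any particular teleoperation system uses. The link lengths, damping value, and function names are assumptions chosen for this example.

```python
import math

L1, L2 = 1.0, 0.8  # hypothetical link lengths in metres

def fk(q):
    """End-effector position of a planar two-link arm."""
    x = L1 * math.cos(q[0]) + L2 * math.cos(q[0] + q[1])
    y = L1 * math.sin(q[0]) + L2 * math.sin(q[0] + q[1])
    return x, y

def jacobian(q):
    """2x2 Jacobian mapping joint velocities to end-effector velocities."""
    s1, c1 = math.sin(q[0]), math.cos(q[0])
    s12, c12 = math.sin(q[0] + q[1]), math.cos(q[0] + q[1])
    return ((-L1 * s1 - L2 * s12, -L2 * s12),
            (L1 * c1 + L2 * c12, L2 * c12))

def dls_step(q, target, damping=0.05):
    """One damped least-squares update: dq = J^T (J J^T + damping^2 I)^-1 e."""
    x, y = fk(q)
    ex, ey = target[0] - x, target[1] - y
    (a, b), (c, d) = jacobian(q)
    # Entries of the symmetric matrix A = J J^T + damping^2 I.
    a11 = a * a + b * b + damping ** 2
    a12 = a * c + b * d
    a22 = c * c + d * d + damping ** 2
    det = a11 * a22 - a12 * a12
    # v = A^-1 e, using the closed-form 2x2 inverse.
    vx = (a22 * ex - a12 * ey) / det
    vy = (-a12 * ex + a11 * ey) / det
    # dq = J^T v
    return (q[0] + a * vx + c * vy, q[1] + b * vx + d * vy)

def track(q, target, iters=50, tol=1e-9):
    """Iterate DLS steps until the end-effector is within tol of the target."""
    for _ in range(iters):
        x, y = fk(q)
        if math.hypot(target[0] - x, target[1] - y) < tol:
            break
        q = dls_step(q, target)
    return q

if __name__ == "__main__":
    q0 = (0.2, 1.5)
    x0, y0 = fk(q0)
    # A small commanded displacement, as a joystick input might produce.
    target = (x0 - 0.05, y0 + 0.02)
    q = track(q0, target)
    print(q, fk(q))
```

The damping term keeps the update bounded near singular configurations, at the cost of slightly slower convergence. For arms with many joints, the same formula applies with a larger Jacobian, which is where a numerical linear algebra library becomes necessary.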

Passive Observation

In passive observation, users perform a task naturally without directly interacting with the robot. Human motion is captured using tools such as 3D cameras or motion-capture systems.

This method is easy for humans, but difficult for robots. Extracting meaningful task information from raw human motion data and transferring it to a robot is a complex problem.

Key Goals of Learning from Demonstration

A central goal of LfD is to enable robots to learn tasks quickly and reliably from only a small number of demonstrations. Achieving this requires improvement in two main areas:

- Learning algorithms – how effectively the robot can learn from the data

- Demonstration quality – how informative and well-structured the human demonstrations are

Improving both aspects is essential for building robotic systems that are easy to teach, adaptable, and practical for real-world use.